APA Style

Linus Tabari, Kate Takyi, Rose-Mary Owusuaa Mensah Gyening. (2026). Attention-Based LSTM for Sign Language Recognition Leveraging Spatial-Temporal Keypoint. Computing&AI Connect, 3 (Article ID: 0034). https://doi.org/Registering DOI

MLA Style

Linus Tabari, Kate Takyi, Rose-Mary Owusuaa Mensah Gyening. "Attention-Based LSTM for Sign Language Recognition Leveraging Spatial-Temporal Keypoint". Computing&AI Connect, vol. 3, 2026, Article ID: 0034, https://doi.org/Registering DOI.

Chicago Style

Linus Tabari, Kate Takyi, Rose-Mary Owusuaa Mensah Gyening. 2026. "Attention-Based LSTM for Sign Language Recognition Leveraging Spatial-Temporal Keypoint." Computing&AI Connect 3 (2026): 0034. https://doi.org/Registering DOI.

ACCESS

Research Article

Volume 3, Article ID: 2026.0034

Linus Tabari

tabarilinus18@gmail.com

Kate Takyi

takyikate@knust.edu.gh

Rose-Mary Owusuaa Mensah Gyening

rmo.mensah@knust.edu.gh

Department of Computer Science, Faculty of Computational and Physical Sciences, Kwame Nkrumah University of Science and Technology, Kumasi, Ghana

* Author to whom correspondence should be addressed

Received: 01 Nov 2025 Accepted: 13 Apr 2026 Available Online: 14 Apr 2026

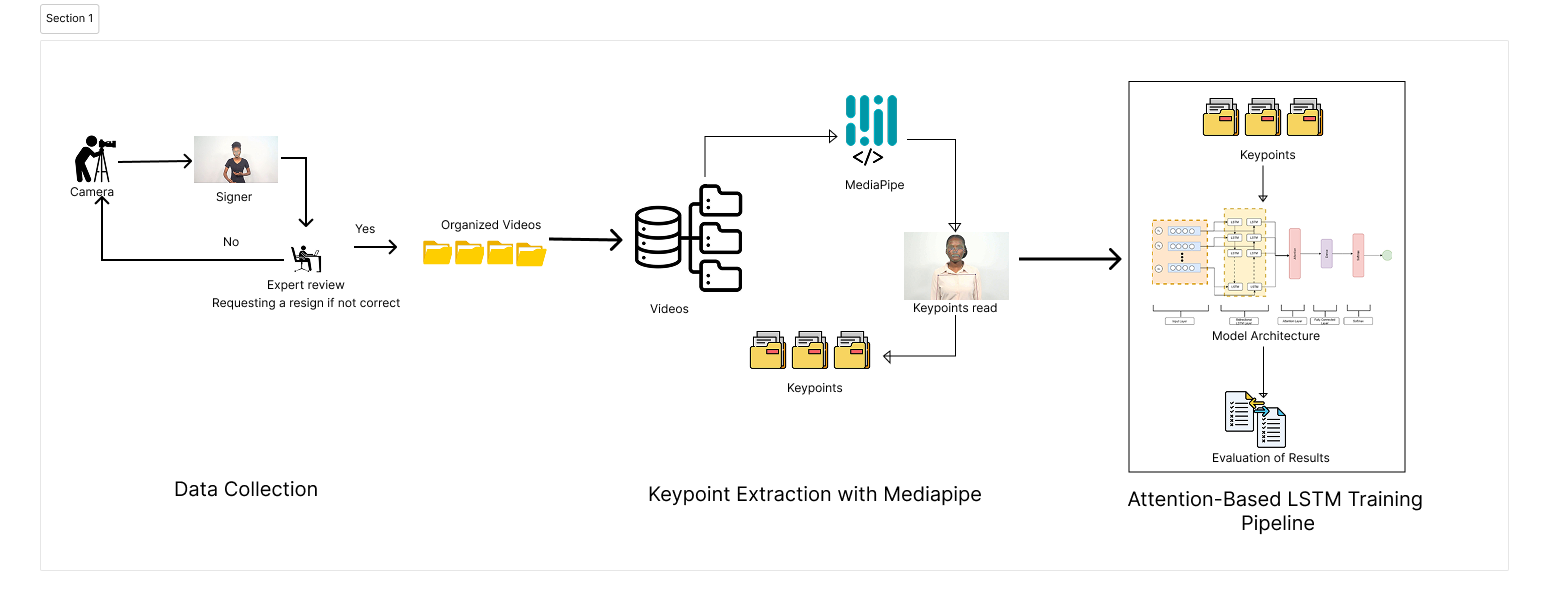

Sign language is a crucial means of communication for the Deaf and hard-of-hearing communities. Most individuals find it challenging to communicate with deaf people without an interpreter. Advances in computer vision and deep learning offer an alternative way to tackle this problem. The literature indicates that Ghanaian Sign Language (GSL) remains understudied, owing to a lack of large, publicly available datasets and to limited research on landmark keypoints for computational work on GSL. This study curated a large video-based dataset, AkwaabaSign, that reflects the indigenous nature of GSL. Two baseline models were used to assess the dataset: an attention-enhanced LSTM and a ConvLSTM, with keypoints extracted using MediaPipe and normalized through a specialized procedure. With this approach, the attention-enhanced LSTM achieved a test accuracy of 94.69%, with 93.32% precision, 92.70% recall, and a 92.66% F1-score. The ConvLSTM achieved 90.28% accuracy, lagging behind the attention-enhanced LSTM. The study delivers a large dataset for sign language recognition, a specialized normalization process for preparing it, and a baseline model for its practical use. The proposed model also outperforms some other algorithms reported in computational work on GSL. Future work aims to expand the dataset to the sentence level and to develop continuous GSL recognition.
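As a rough illustration of what attention over a sequence of per-frame features can look like, the sketch below pools a sequence of hidden states into a single vector using softmax weights. It is a generic NumPy example, not the authors' architecture: the function name `attention_pool`, the scoring vector `w`, and the sizes `T` and `D` are all hypothetical, and random values stand in for real LSTM outputs.

```python
import numpy as np

def attention_pool(hidden_states, w):
    """Collapse a (T, D) sequence of per-frame states into one (D,) vector.

    hidden_states: array of shape (T, D), e.g. recurrent outputs per frame.
    w: scoring vector of shape (D,); learned in a real model, random here.
    """
    scores = hidden_states @ w          # (T,) one relevance score per frame
    scores = scores - scores.max()      # shift for a numerically stable softmax
    weights = np.exp(scores)
    weights = weights / weights.sum()   # softmax: non-negative, sums to 1
    context = weights @ hidden_states   # (D,) weighted average over frames
    return context, weights

# Hypothetical sizes: 30 frames, 64 hidden units per frame.
rng = np.random.default_rng(0)
T, D = 30, 64
hidden_states = rng.standard_normal((T, D))
w = rng.standard_normal(D)

context, weights = attention_pool(hidden_states, w)
```

In a trained model the context vector would typically feed a classification layer, and the weights indicate which frames contributed most to the prediction.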

Disclaimer: This is not the final version of the article. Changes may occur when the manuscript is published in its final format.
